Two months before Election Day, a photo of singer Elton John in a pink coat with the letters “MAGA” on it surfaced on social media, suggesting the global superstar had endorsed former President Donald Trump.

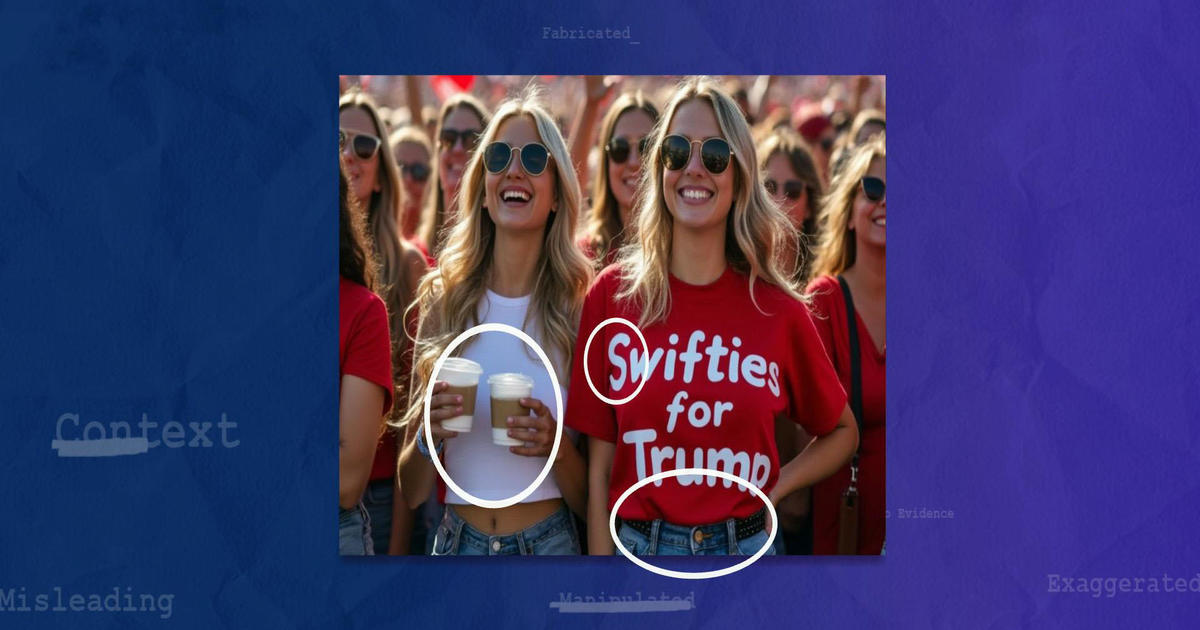

But the photo wasn’t real. It’s the latest in a series of images and videos created using artificial intelligence that aim to dupe viewers into thinking their favorite celebrities have endorsed political candidates.

Stars including Will Smith and Taylor Swift have also had their likenesses used to falsely claim they are supporting Trump in the upcoming presidential election.

When Swift publicly endorsed Vice President Kamala Harris in an Instagram post, she referenced AI-generated images that falsely suggested she had endorsed Trump, adding that the incident “brought me to the conclusion that I need to be very transparent about my actual plans for this election as a voter.”

An AI-generated video of Will Smith and Chris Rock, which amassed over 700,000 views on X, showed the stars eating large plates full of spaghetti with Trump.

How to identify AI-generated images

There are three main ways to tell if an image or video has been AI-generated or manipulated, Claire Leibowicz, head of the AI and Media Integrity Program at the nonprofit technology coalition The Partnership on AI, told CBS News.

The first way is to look for airbrushing, smudging or “things that defy the laws of physics,” Leibowicz said.

The second is to find any visual inconsistencies. In the case of the Elton John image, for example, the MAGA letters on his jacket were sewn across the lapels and his glasses were too close together.

The third way is to trace the image to its original source with a reverse image search. Take a screenshot and upload it to Google Lens or a similar tool; the results will show whether matching copies exist online, which can help you verify where the image came from.

Leibowicz added that she was previously fooled by AI when a fabricated image of Pope Francis went viral online.

“This is getting harder, and we’re [going to] need journalists and other experts to really be helping us authenticate content,” she said.

A poll conducted by the Polarization Research Lab in March found that nearly 50% of Americans believe AI will make elections worse, about 30% are unsure and 20% believe AI will improve the election process.

Sam Gregory, executive director of Witness.org, a global nonprofit that uses video and technology to protect human rights, told CBS News, “Most of the information that will mislead us around the elections is likely to be powerful leaders telling lies or misrepresenting the truth in public.”

The Department of Homeland Security released a bulletin in May warning the public of the challenges AI can create for the November presidential election, saying the “timing of election-specific AI-generated media can be just as critical as the content itself, as it may take time to counter-message or debunk the false content permeating online.”